Now that VMworld 2013 is over and a number of key product updates have been announced, I thought it was worth highlighting some of the enhancements and improvements that stretch across the entire stack in vSphere 5.5. As the summary below shows, this release is a major step forward in VMware's strategic move toward the fully software-defined data center.

Flash Read Cache

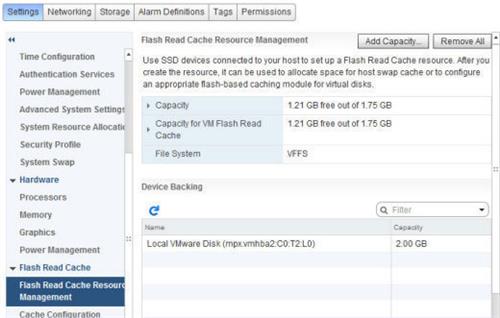

This completely new feature in vSphere 5.5 lets you use fast SSDs either as a host swap cache or as a read cache that speeds up reads from a virtual disk. To use it, you first create a new flash resource, as shown in the screenshot. You must then connect each VM that needs access to that resource. For a Linux guest, this could mean configuring a swap disk to use part of the read cache. Another option is to enable read caching for a VM.

Application HA

Also new in vSphere 5.5 is policy-based application monitoring and automatic remediation. Based on vFabric Hyperic, Application HA supports a short list of off-the-shelf applications, including Microsoft SQL Server, SharePoint, IIS, and the Apache Web Server, and can attempt to restart an application when it detects a failure. If the application restart is unsuccessful, the feature uses vSphere HA to restart the VM on the same host after a predetermined amount of time. If that also fails, the VM is restarted on another host.
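The escalation sequence described above can be sketched as an ordered list of remediation steps, each tried only if the previous one failed. This is a minimal illustration under stated assumptions: `remediate` and its three callback parameters are hypothetical stand-ins, not part of any VMware or Hyperic API, and the predetermined wait between steps is left out.

```python
from typing import Callable

# Hypothetical remediation step: returns True if it restored service.
Step = Callable[[], bool]

def remediate(restart_app: Step,
              restart_vm_same_host: Step,
              restart_vm_other_host: Step) -> str:
    """Try each remediation step in order, stopping at the first success."""
    ladder = [
        ("application restarted", restart_app),
        ("VM restarted on same host", restart_vm_same_host),
        ("VM restarted on another host", restart_vm_other_host),
    ]
    for outcome, step in ladder:
        if step():
            return outcome
    return "remediation failed"

# The application will not come back, but restarting the VM in place works.
print(remediate(lambda: False, lambda: True, lambda: True))
# VM restarted on same host
```

Passing the steps in as callbacks keeps the ordering logic separate from whatever actually performs a restart, so the escalation order can be exercised without a real cluster.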

VMware has added a number of improvements at both the VM and hypervisor levels to help reduce latency. At the VM level, this consists of a single setting that tells vSphere how sensitive the VM is to latency. For highly sensitive applications, the underlying hypervisor can do things like bypass the CPU scheduling algorithms and dedicate one or more physical CPUs exclusively to a single VM. Additional actions include reserving memory for latency-sensitive VMs and disabling networking features such as interrupt coalescing and LRO (large receive offload) support on the vNIC, which makes network response more predictable.

The 2TB limit on VM disks is starting to pinch. With vSphere 5.5, the maximum size for VMDK files increases all the way to 62TB. Why that particular number, you might ask? VMware settled on something smaller than 64TB to leave room for snapshots and any other required services while staying under the 64TB volume size limit. Existing VMDK files must be taken offline before they can be expanded. The new huge VMDK size will not be supported in the initial release of the VSAN product, however; expect that to come at a later date.

One of the limitations of previous versions of VMware virtual machines was the small number of virtual devices they supported. The vSphere 5.5 release introduces Virtual Hardware 10, which adds SATA-based virtual device nodes through AHCI (Advanced Host Controller Interface) support. AHCI support is required for OS X guests going forward because Apple has dropped support for IDE devices. It also makes it possible to connect up to 120 devices per VM (four SATA controllers with 30 devices each).

Virtual Hardware 10 also adds a number of new graphics capabilities. First up is support for both AMD and Intel GPUs. This update also makes it possible to vMotion a VM between dissimilar hardware platforms, including hosts with different GPUs; previously, vMotion required similar hardware on both ends. This release also supports OpenGL 2.1, the default graphics API in popular Linux distributions including Fedora 17 and Ubuntu 12.

In very large data centers, it can be a challenge to identify the appropriate class of storage for a specific purpose. In this screenshot, you can see the name Print Server being assigned to a new VM Storage Policy. This creates a policy that can be applied to every new virtual machine that needs storage for a print server, making storage provisioning much simpler.
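Conceptually, a storage policy is a set of requirements that a datastore's advertised capabilities must satisfy. The sketch below models that matching with plain dictionaries. The capability names (`tier`, `replicated`), the datastore names, and the `compatible_datastores` helper are invented for illustration, and exact-equality matching is a simplification, not how vSphere itself evaluates policies.

```python
from dataclasses import dataclass, field

@dataclass
class Datastore:
    name: str
    capabilities: dict[str, object] = field(default_factory=dict)

@dataclass
class StoragePolicy:
    name: str
    requirements: dict[str, object] = field(default_factory=dict)

def compatible_datastores(policy: StoragePolicy,
                          datastores: list[Datastore]) -> list[str]:
    """Names of datastores whose capabilities meet every policy requirement."""
    return [
        ds.name
        for ds in datastores
        if all(ds.capabilities.get(key) == value
               for key, value in policy.requirements.items())
    ]

# Hypothetical inventory and policy for the "Print Server" example.
datastores = [
    Datastore("ssd-01", {"tier": "gold", "replicated": True}),
    Datastore("sata-01", {"tier": "bronze", "replicated": False}),
    Datastore("sata-02", {"tier": "bronze", "replicated": True}),
]
print_server = StoragePolicy("Print Server", {"tier": "bronze"})
print(compatible_datastores(print_server, datastores))  # ['sata-01', 'sata-02']
```

The payoff is that an administrator picks a policy by name once, and the list of suitable datastores follows from the requirements rather than from memory.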

LACP (Link Aggregation Control Protocol) lets you aggregate the bandwidth of multiple physical NICs. Whereas vSphere 5.1 supported only one LACP group per distributed switch, severely limiting your aggregation options, vSphere 5.5 supports up to 64. You can also save LACP configurations as templates to apply to other hosts, and you can choose from 22 different hashing algorithms (versus just IP hash in 5.1) to distribute load across links.
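To see why the choice of hashing algorithm matters, here is a minimal sketch of hash-based load distribution across the links of a link aggregation group. It hashes each flow's source and destination addresses and ports with CRC32; this is an illustrative stand-in, not one of the 22 algorithms vSphere 5.5 actually offers, and `pick_uplink` is a hypothetical name.

```python
import zlib

def pick_uplink(src_ip: str, dst_ip: str, src_port: int, dst_port: int,
                num_links: int) -> int:
    """Return the index of the LAG link that should carry this flow."""
    if num_links < 1:
        raise ValueError("a LAG needs at least one link")
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}".encode()
    # CRC32 is deterministic, so every packet in a flow hashes to the same
    # link and packets within the flow stay in order.
    return zlib.crc32(key) % num_links

# Count how 1,000 flows from one client spread over a 4-link LAG.
counts = [0] * 4
for port in range(49152, 50152):
    counts[pick_uplink("10.0.0.5", "10.0.1.20", port, 443, 4)] += 1
print(counts)
```

Because the link is chosen per flow rather than per packet, a single flow never exceeds the bandwidth of one physical NIC; aggregation pays off only when there are many flows. With IP hash alone, all 1,000 flows in this example share one address pair and would land on the same link, which is why hashing on additional header fields is useful.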

Sometimes it becomes necessary to capture the packets crossing the network to track down a problem. The latest version of vSphere includes an enhanced version of the open source packet analyzer tcpdump, plus a number of port-mirroring options for capturing traffic in a variety of places. You can also capture packets at the host level from virtual NICs, virtual switches, and uplinks.

Moving network traffic from host A to host B in a virtual network now resembles what you would expect on a physical network with sophisticated switches. The vSphere 5.5 distributed switch can now mark and direct Layer 3 traffic using the Differentiated Services Code Point (DSCP) field in the IP packet header, part of the Differentiated Services (DiffServ) architecture, and can filter traffic much like the access control lists found on many high-end physical switches. Individual rules can be configured on a distributed switch to handle specific types of traffic and provide a higher quality of service when necessary.
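The DSCP field occupies the upper six bits of the IPv4 Type of Service byte (the Traffic Class byte in IPv6); the lower two bits carry ECN. The sketch below shows how a marking rule could read and rewrite that field. The helper names are hypothetical, and the example uses the standard Expedited Forwarding code point (46) rather than any particular vSphere rule.

```python
EF = 46  # Expedited Forwarding (RFC 3246), commonly used for voice traffic

def dscp_from_tos(tos: int) -> int:
    """Return the 6-bit DSCP value from an IPv4 TOS / IPv6 Traffic Class byte."""
    if not 0 <= tos <= 0xFF:
        raise ValueError("TOS byte must be in the range 0-255")
    return tos >> 2  # DSCP is the upper six bits

def mark_dscp(tos: int, dscp: int) -> int:
    """Return a new TOS byte carrying `dscp`, preserving the two ECN bits."""
    if not 0 <= tos <= 0xFF:
        raise ValueError("TOS byte must be in the range 0-255")
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0-63")
    return (dscp << 2) | (tos & 0b11)

marked = mark_dscp(0x00, EF)
print(hex(marked), dscp_from_tos(marked))  # 0xb8 46
```

Preserving the ECN bits matters because they are set by congestion-aware endpoints and routers independently of any QoS marking.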

The vSphere Web Client has seen a number of enhancements in this release. Many reflect user feedback, such as the "10 most recent objects" list shown in the screenshot. Other user-experience improvements include new drag-and-drop support and the ability to filter search results in large installations.

The vCenter Server Appliance, meanwhile, gets a scalability boost. Previous versions supported a limited number of hosts and VMs, but these limits have been increased to 500 hosts and up to 5,000 VMs.

VMware has also poured a good deal of effort into making vCenter Single Sign-On simpler to install and easier to scale across multiple vCenter Server instances. Version 5.5 will even include a suite of diagnostic tools.

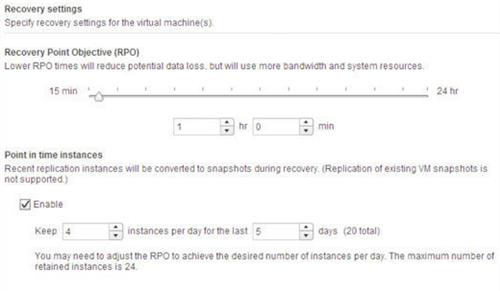

Previous versions of vSphere Replication kept only the most recent copy of a virtual machine. Version 5.5 can keep up to 24 historical snapshots. You could retain one replica per day for 24 days, or one per hour for 24 hours, however you want to slice it. Recovery always uses the most recent copy, but from there you can use the snapshot manager to revert to any other point in time.
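The retention arithmetic is simple: with N copies taken one interval apart, the oldest copy is N - 1 intervals older than the newest. The sketch below lists the recovery points a policy would keep, newest first. The 24-copy ceiling comes from the text above; the `retained_points` helper and the example timestamps are hypothetical.

```python
from datetime import datetime, timedelta

MAX_POINTS = 24  # upper limit on retained copies in vSphere Replication 5.5

def retained_points(latest: datetime, interval: timedelta,
                    count: int) -> list[datetime]:
    """Recovery points kept under a policy, newest first."""
    if not 1 <= count <= MAX_POINTS:
        raise ValueError(f"count must be between 1 and {MAX_POINTS}")
    return [latest - i * interval for i in range(count)]

latest = datetime(2013, 9, 1, 12, 0)
daily = retained_points(latest, timedelta(days=1), 24)
hourly = retained_points(latest, timedelta(hours=1), 24)
print(daily[-1])   # 2013-08-09 12:00:00
print(hourly[-1])  # 2013-08-31 13:00:00
```

Recovery starts from the first entry in the list; the remaining entries are the earlier points you could revert to afterward.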

Source: networkworld